| Section categories |

|

Related Subjects [38]

This category includes brief overview of all related subjects.

|

|

Defining BioInformatics [7]

In this section we tried to briefly explain what bioinformatics is ?

|

|

Unviersities [30]

This contains information about universities that are offering bioinformatics degree programs.

|

|

Resources [24]

Contains information about bioinformatics resources including databases, tools and techniques.

|

|

Algorithms [31]

This category includes some of the basic algorithms that are usually used by bioinformaticians.

|

|

| Statistics |

Total online: 1 Guests: 1 Users: 0 |

|

Home » 2011 » August » 22 » Genetic Algorithms 2

11:22 PM Genetic Algorithms 2 |

Genetic Algorithms

6. Parent/Survivor Selection: - Strategies

- Always keep the best one

- Elitist: deletion of the K worst

- Probability selection : inverse to their fitness

- Etc.

- Too strong fitness selection bias can lead to sub-optimal solution

- Too little fitness bias selection results in unfocused and meandering search

- Parent selection

- Chance to be selected as parent proportional to fitness

- To avoid problems with fitness function

- Not a very important parameter

- Uniform randomly selection

- Probability selection : proportional to their fitness

- Tournament selection (Multiple Objectives)

- Build a small comparison set Randomly select a pair with the higher rank one beats the lower one Non-dominated one beat the dominated one Niche count: the number of points in the population within� certain distance, higher the niche count, lower the rank.

- Etc.

- Others

- Global Optimal

- Parameter Tuning

- Parallelism

- Random number generators

7. Example Of Coding For TSP: - Binary

- Cities are binary coded; chromosome is string of bits

- Most chromosomes code for illegal tour

- Several chromosomes code for the same tour

- Path

- Cities are numbered; chromosome is string of integers

- Most chromosomes code for illegal tour

- Several chromosomes code for the same tour

- Ordinal

- Cities are numbered, but code is complex

- All possible chromosomes are legal and only one chromosome for each tour

- Several others

8. Roulette wheel: - Sum the fitness of all chromosomes, call it T

- Generate a random number N between 1 and T

- Return chromosome whose fitness added to the running total is equal to or larger than N

- Chance to be selected is exactly proportional to fitness

Chromosome : 1 2 3 4 5 6 Fitness : 8 2 17 7 4 11 Running total : 8 10 27 34 38 49 N (1 £ N £ 49): 23 Selected : 3

9. Tournament:

- Binary tournament

- Two individuals are randomly chosen; the fitter of the two is selected as a parent

- Probabilistic binary tournament

- Two individuals are randomly chosen; with a chance p, 0.5<p<1, the fitter of the two is selected as a parent

- Larger tournaments

- n individuals are randomly chosen; the fittest one is selected as a parent

- By changing n and/or p, the GA can be adjusted dynamically

10. Problems With Fitness Range: - Premature Convergence

- DFitness too large

- Relatively superfit individuals dominate population

- Population converges to a local maximum

- Too much exploitation; too few exploration

- Slow Finishing

- DFitness too small

- No selection pressure

- After many generations, average fitness has converged, but no global maximum is found; not sufficient difference between best and average fitness

- Too few exploitation; too much exploration

11. Solutions For These Problems: - Use tournament selection

- Implicit fitness remapping

- Adjust fitness function for roulette wheel

- Explicit fitness remapping

- Fitness scaling

- Fitness windowing

- Fitness ranking

12. Fitness Function: - Purpose

- Parent selection

- Measure for convergence

- For Steady state: Selection of individuals to die

- Should reflect the value of the chromosome in some "real” way

- Next to coding the most critical part of a GA

- Fitness scaling

- Fitness values are scaled by subtraction and division so that worst value is close to 0 and the best value is close to a certain value, typically 2

- Chance for the most fit individual is 2 times the average

- Chance for the least fit individual is close to 0

- Problems when the original maximum is very extreme (super-fit) or when the original minimum is very extreme (super-unfit)

- Can be solved by defining a minimum and/or a maximum value for the fitness

- Example of Fitness Scaling

- Fitness windowing

- Same as window scaling, except the amount subtracted is the minimum observed in the n previous generations, with n e.g. 10

- Same problems as with scaling

- Fitness Ranking

- Individuals are numbered in order of increasing fitness

- The rank in this order is the adjusted fitness

- Starting number and increment can be chosen in several ways and influence the results

- No problems with super-fit or super-unfit

- Often superior to scaling and windowing

- Fitness Evaluation

- A key component in GA

- Time/quality trade off

- Multi-criterion fitness

- Multi-Criterion Fitness

- Dominance and Indifference

- For an optimization problem with more than one objective function (fi, i=1,2,…n)

- given any two solution X1 and X2, then

- X1 dominates X2 ( X1 X2), if

fi(X1) >= fi(X2), for all i = 1,…,n

- X1 is indifferent with X2 ( X1 ~ X2), if X1 does not dominate X2, and X2 does not dominate X1

- Pareto Optimal Set

- If there exists no solution in the search space which dominates any member in the set P, then the solutions belonging the the set P constitute a global Pareto-optimal set.

- Pareto optimal front

- Dominance Check

- Weighted sum

- F(x) = w1f1(x1) + w2f2(x2) +…+wnfn(xn)

- Problems?

- Convex and convex Pareto optimal frontSensitive to the shape of the Pareto-optimal front

- Selection of weights?

- Need some pre-knowledge

- Not reliable for problem involving uncertainties

- Optimizing single objective

- Maximize: fk(X) Subject to: fj(X) <= Ki ,i <> k , X in F where F is the solution space.

- Preference based weighted sum

- �(ISMAUT Imprecisely Specific Multiple Attribute Utility Theory)

- F(x) = w1f1(x1) + w2f2(x2) +…+wnfn(xn)

- Preference

- Given two know individuals X and Y, if we prefer X than Y, then� F(X) > F(Y), �that is� w1(f1(x1)-f1(y1)) +…+wn(fn(xn)-fn(yn)) > 0

- All the preferences constitute a linear space Wn={w1,w2,…,wn}

- w1(f1(x1)-f1(y1)) +…+wn(fn(xn)-fn(yn)) > 0

- w1(f1(z1)-f1(p1)) +…+wn(fn(zn)-fn(pn)) > 0, etc

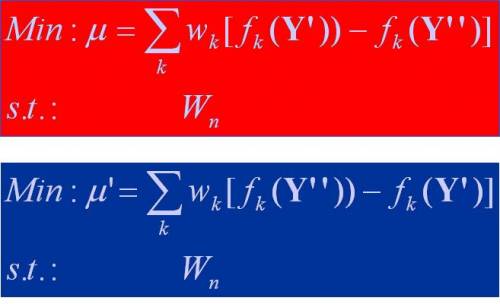

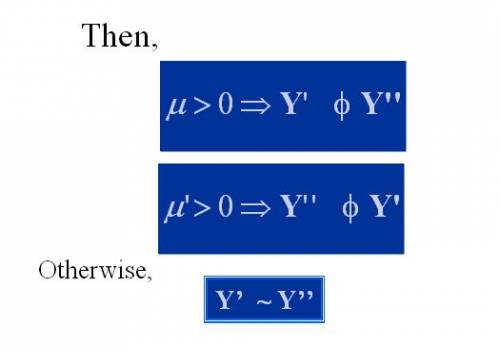

- For any two new individuals Y’ and Y’’, how to determine which one is more preferable?

Construct the dominant relationship among some indifferent ones according to the preferences.

|

|

|

Category: Algorithms |

Views: 1436 |

Added by: Ansari

| Rating: 0.0/0 |

|

|

|